I’m Building AI Agents. This Week Made Me Question Everything.

If you’re new here — welcome. I write about what building AI actually looks like from the trenches. Hit subscribe so you don’t miss what’s coming. Now let’s get into it.

I was in a Twitter Space minding my business.

AI agents, building in public, the usual. Someone dropped a link mid-conversation and the whole vibe shifted.

“Anthropic just got blacklisted by the Pentagon.”

I sat up.

Not because I’m a geopolitics guy. Not because I follow military contracts. But because I’m a developer actively building AI agents, and something about this story felt personal in a way I couldn’t shake.

Let me tell you why.

A Bit of Context First

I’m building AIDevelopia. Production AI copilots — support bots, email agents, Telegram integrations. Real work, real users, real stakes. Nothing glamorous. Just a Nigerian developer in the grind, trying to ship something that actually matters.

Every decision I make in that codebase has a philosophy behind it. Human oversight. Controlled outputs. No black-box behaviour that I can’t explain to a client. That’s not marketing copy — it’s how I actually build.

So when I heard Anthropic got blacklisted for refusing to remove safety limits from Claude, I didn’t just read it as news. I read it as a mirror.

If you’re finding this useful already — share it with one developer in your circle. This is the conversation our community needs to be having.

Here’s What Actually Happened

The Pentagon wanted unrestricted access to Claude for military use. Anthropic said fine — but with two hard lines.

No mass domestic surveillance of Americans. And no fully autonomous weapons: no AI making final lethal targeting decisions without a human in the loop.

The Pentagon said “all lawful purposes or you’re out.”

Anthropic CEO Dario Amodei let the deadline pass. His statement was quiet and direct: “We cannot in good conscience accede to their request.”

Trump called them “leftwing nut jobs” on Truth Social. Defense Secretary Hegseth labeled them a national security supply chain risk — the same designation reserved for Huawei and Chinese military companies. Every federal agency was ordered to stop using Anthropic immediately.

$200 million contract. Gone.

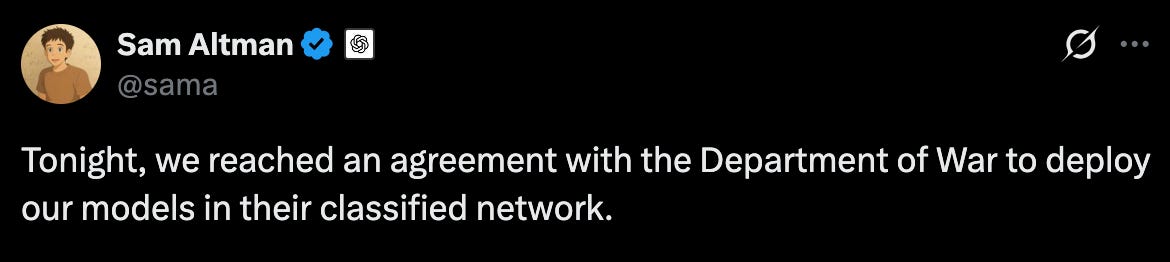

Hours later — same night — OpenAI announced their deal. “All lawful purposes.” Signed.

Then the part that broke my brain: the US military used Claude in airstrikes on Iran that same night. Target identification. Intelligence assessments. Battle simulation. The ban was live. The operation was already in motion. They used it anyway.

Banned in the morning. Deployed in a war zone by midnight.

This is the kind of story that gets buried fast. If it surprised you — like this post so more developers find it.

The Thing That Hit Me as a Developer

Dario didn’t make a moral argument. He made a technical one.

“Current models aren’t reliable enough” for fully autonomous weapons.

And I felt that in my bones — because I work with these models every day.

I know what it looks like when a model drifts mid-session. I know what hallucinations look like under pressure. I know how context degrades when you push a long conversation. The “confident wrong answer” problem is real, and I’ve debugged it more times than I can count.

Now imagine that in a system selecting targets. No human checkpoint. Just the model, the context window, and a decision that can’t be undone.

That’s not a political argument. That’s an engineering reality. And Dario was the only CEO in the room saying it out loud.

I’ve been writing about the real side of building with AI — the parts nobody puts in the tutorial. Subscribe if you want more of this, straight to your inbox.

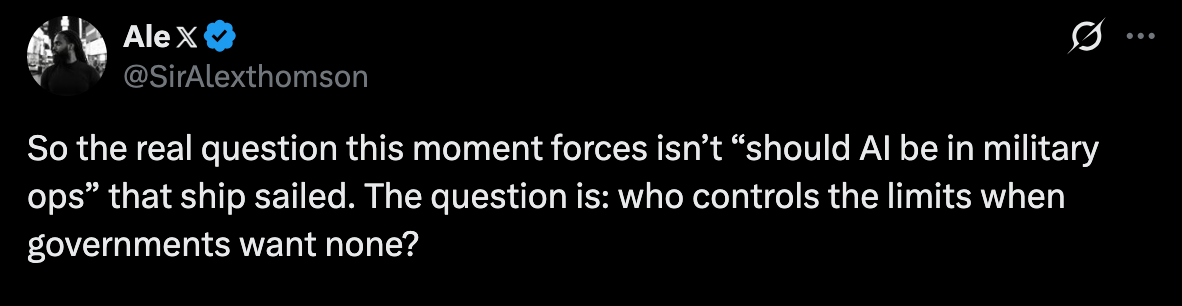

The Part Nobody Is Talking About

Over 430 employees at Google and OpenAI signed an open letter supporting Anthropic’s position. They called the Pentagon’s approach a “divide and conquer” strategy: pressure each company with the fear that a competitor will cave first.

Employees of Anthropic’s competitors, including the company that signed the deal, were publicly backing the company that didn’t.

Outside Anthropic’s offices in San Francisco, people left chalk messages on the pavement. “Keep going.” “You give us courage.” Supporters gathered in Golden Gate Park in the dark.

A company got blacklisted by its own government. And people showed up for it.

I don’t know about you — but that moved me.

Drop a comment below — did this hit different for you too? I read every single one.

What I’m Taking Into My Own Build

I’m not Anthropic. I don’t have a $380 billion valuation or a $200 million government contract to walk away from.

But I do have choices about how I build.

Every agent I ship has a human in the loop somewhere. Not because a client asked for it. Because I believe that’s the right engineering default — especially now. Especially while the models are still drifting, still hallucinating, still losing the thread in long sessions.
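For anyone who wants to see what that default can look like in code, here is a minimal sketch in Python. It is illustrative only and not AIDevelopia’s actual code: the names `ProposedAction` and `run_with_oversight`, the `reversible` flag, and the reviewer callback are all assumptions made up for this example. The idea is simply that the model can propose anything, but an action that can’t be undone never runs without a person approving it first.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    """Something the model wants to do (hypothetical shape, for illustration)."""
    kind: str         # e.g. "draft_reply", "issue_refund"
    payload: str      # the text or instruction the model produced
    reversible: bool  # can a human undo this after the fact?


def run_with_oversight(
    action: ProposedAction,
    reviewer: Callable[[ProposedAction], bool],
    execute: Callable[[ProposedAction], None],
) -> str:
    """Run reversible actions directly; route everything else to a human first.

    `reviewer` returns True to approve and False to reject.
    `execute` performs the side effect (send, refund, post, ...).
    """
    if action.reversible:
        execute(action)
        return "executed"
    if reviewer(action):
        execute(action)
        return "executed_after_approval"
    # Rejected: nothing runs, and the caller can log or escalate.
    return "rejected_by_human"
```

In a real bot the reviewer might be a Telegram message with approve and reject buttons, or a ticket in a support queue. The design choice that matters is that the safe path is the default: if nobody approves, nothing irreversible happens.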

This week confirmed something I already believed: the developers who think carefully about limits now (before the pressure comes, before the contract lands, before someone offers you money to remove the guardrails) are the ones who will still be proud of what they built five years from now.

The ones who don’t? They’ll be explaining themselves later. Or they won’t have to, because nobody will ask.

If this resonated — share it. Restack it. Send it to the developer you know who’s moving too fast to ask these questions. This conversation matters.

One Last Thing

The military is still running Claude right now. On classified networks. In active operations. Despite the ban. Because the integration is too deep to unwind with an executive order.

Anthropic built something so essential that even the people trying to destroy them couldn’t stop using it.

That’s the kind of builder I want to be. Not one who bends when the pressure comes. One who builds something so right, so solid, so necessary — that even your enemies can’t let go.

That’s the standard.

What’s yours?

I’m building AIDevelopia — AI agents for real work, with human oversight built in. If that sounds like something you’d use or want to follow, I’d love to have you along for the ride.


